What is Synthetic Data?

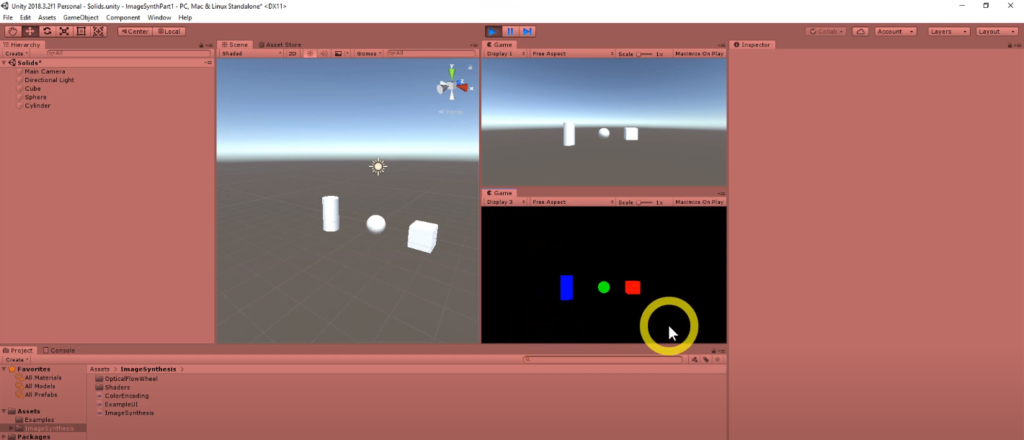

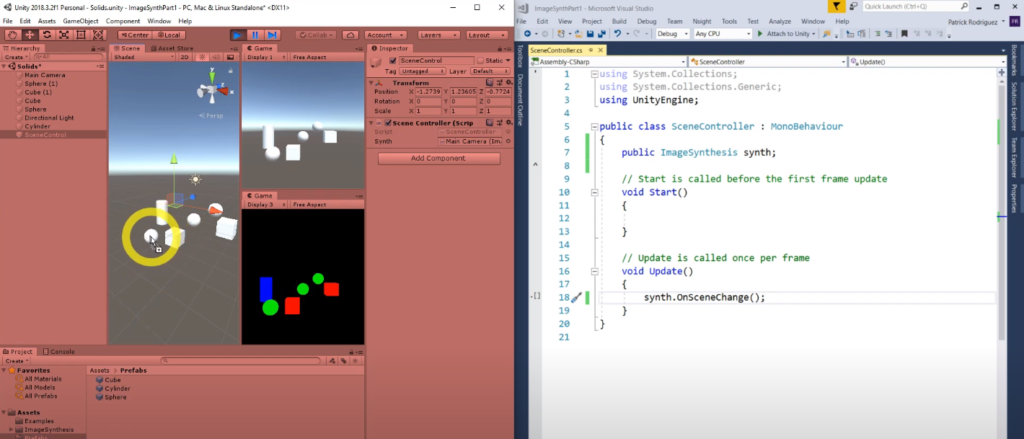

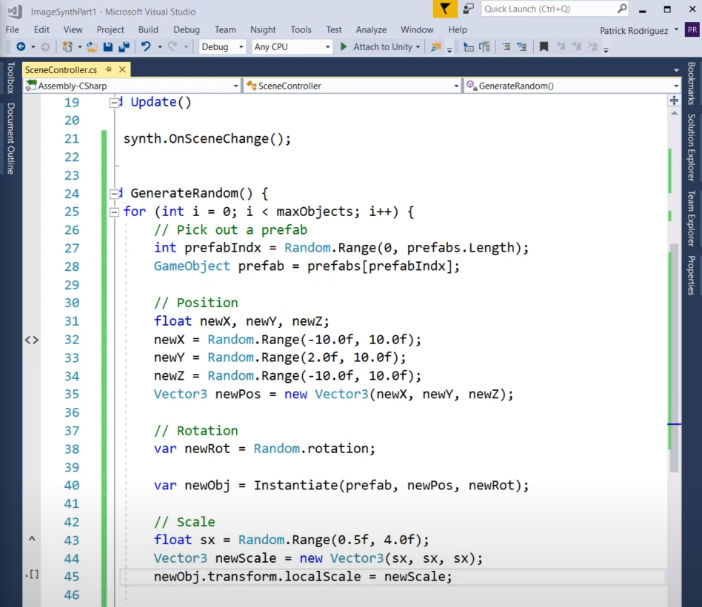

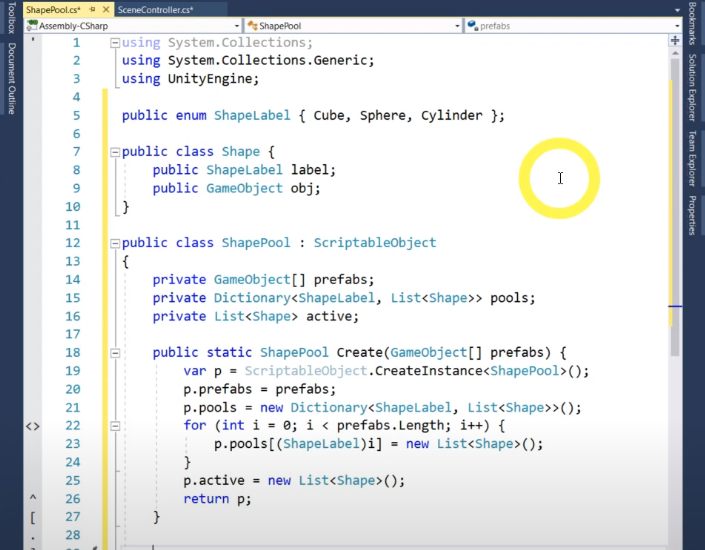

Synthetic data is data of any kind (images, text, or audio) that is generated algorithmically rather than captured from real-world events. Synthetic datasets are used as a stand-in for production or operational data in testing, to validate mathematical models against the behaviour of real-world data and, increasingly, to train machine learning models.
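To make "generated algorithmically" concrete, here is a minimal sketch that produces a small tabular dataset from simple random distributions. Everything in it is an assumption made for illustration: the function name `generate_synthetic_trips`, the field names, and the distribution parameters are invented and do not come from any real dataset or tool.

```python
import random


def generate_synthetic_trips(n, seed=0):
    """Generate n synthetic trip records from simple, made-up distributions."""
    rng = random.Random(seed)  # seeded so the same dataset can be regenerated
    trips = []
    for trip_id in range(n):
        # Hypothetical trip length, uniform between 1 and 50 km
        distance_km = round(rng.uniform(1.0, 50.0), 2)
        # Hypothetical average speed: normal distribution, clamped to a plausible range
        speed_kmh = min(max(rng.gauss(45.0, 12.0), 5.0), 130.0)
        # Duration is derived from the other two fields so each record is self-consistent
        duration_min = round(distance_km / speed_kmh * 60.0, 1)
        trips.append(
            {
                "trip_id": trip_id,
                "distance_km": distance_km,
                "avg_speed_kmh": round(speed_kmh, 1),
                "duration_min": duration_min,
            }
        )
    return trips


if __name__ == "__main__":
    for record in generate_synthetic_trips(3, seed=42):
        print(record)
```

No real-world event is recorded at any point; every value comes from the generator, which is what distinguishes this from collected data. A realistic generator would fit its distributions to real measurements rather than use hand-picked numbers.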

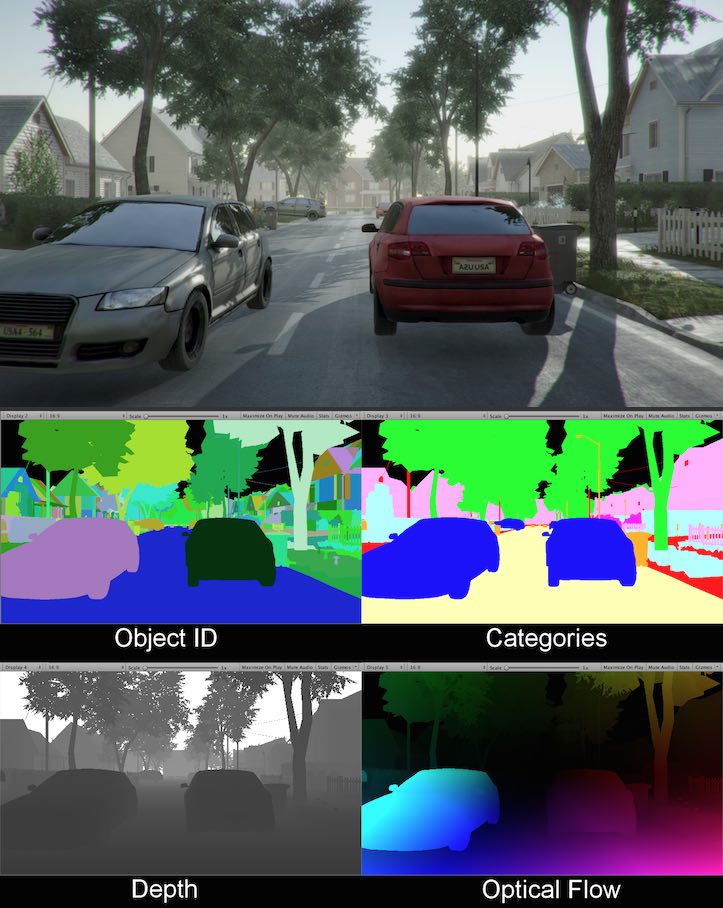

Datasets can be fully or partially synthetic. An image of a 3D car model driving through a 3D environment is entirely synthetic, while a 3D car model placed into a photograph of a real location is only partially synthetic.
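The partially synthetic case can be sketched as a compositing step: a rendered object is pasted onto a real background. The example below is a toy illustration under stated assumptions. Images are represented as plain nested lists of grey values, `photo` stands in for a real photograph, `car` stands in for a rendered 3D model, and the `composite` helper is hypothetical rather than taken from any imaging library.

```python
def composite(background, sprite, top, left, transparent=None):
    """Paste sprite onto a copy of background; transparent pixels are skipped."""
    result = [row[:] for row in background]  # copy so the original stays untouched
    for dy, sprite_row in enumerate(sprite):
        for dx, pixel in enumerate(sprite_row):
            y, x = top + dy, left + dx
            inside = 0 <= y < len(result) and 0 <= x < len(result[0])
            if pixel is not transparent and inside:
                result[y][x] = pixel
    return result


# Stand-in for a real photograph: a 4x6 grid of grey values (hypothetical data)
photo = [[100] * 6 for _ in range(4)]

# Stand-in for a rendered car: None marks transparent pixels
car = [
    [None, 255, None],
    [255, 255, 255],
]

# Real background plus synthetic object gives a partially synthetic image
partially_synthetic = composite(photo, car, top=1, left=2)

if __name__ == "__main__":
    for row in partially_synthetic:
        print(row)
```

A fully synthetic image would replace `photo` with a rendered background as well, so that no pixel originates from a camera.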

Advantages and disadvantages

The main advantages of using synthetic data instead of real-world data are that it is more cost-effective, can preserve privacy, and can be tested efficiently. This applies especially to the automotive space, where collecting real-world data can be both time-consuming and costly.

Synthetic data can be generated by stripping personal information, such as names, license plates, and locations, from a real dataset so that it is anonymised. Once all personal data has been removed, the information should no longer be traceable to the original source, which addresses the privacy concerns attached to real-world data.
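The removal step described above can be sketched as a simple field filter. This is only an illustration: the field names in `PERSONAL_FIELDS`, the `anonymise` helper, and the sample records are all invented for this example, and a real dataset would need its own list of identifying fields.

```python
# Hypothetical set of fields treated as personal information
PERSONAL_FIELDS = frozenset({"name", "license_plate", "location"})


def anonymise(records, personal_fields=PERSONAL_FIELDS):
    """Return new records with the personal fields dropped; inputs are not modified."""
    return [
        {key: value for key, value in record.items() if key not in personal_fields}
        for record in records
    ]


# Made-up sample records standing in for a real dataset
real_records = [
    {"name": "A. Driver", "license_plate": "XX-000-XX", "location": "Sample Street",
     "speed_kmh": 62.0, "weather": "rain"},
    {"name": "B. Driver", "license_plate": "YY-111-YY", "location": "Example Road",
     "speed_kmh": 48.5, "weather": "clear"},
]

if __name__ == "__main__":
    for record in anonymise(real_records):
        print(record)
```

Dropping direct identifiers is the simplest form of anonymisation. Whether the remaining fields can still be combined to re-identify someone depends on the dataset, so the list of removed fields should be reviewed case by case.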